
Choose Marketing-Jive as your digital marketing agency and accelerate your business revenue growth with our award-winning digital marketing services and proven strategies. Request your proposal now to receive a free consultation, customized plan, pricing, and strategy guide!

We offer comprehensive solutions to help businesses large and small reach their goals, and have been doing so for over 10 years! Our team of digital marketing experts, SEO specialists, Google Ads managers, and web designers is here to help you reach your target audience and increase brand visibility!

Whether your goal is more online traffic, a higher conversion rate, or a better ROI, every business can benefit from a comprehensive digital marketing strategy. We have the skills and expertise to develop a custom-tailored strategy that meets your business's needs and goals. From SEO to social media, we offer a wide range of services that can benefit businesses such as:

Unfortunately, an estimated 1 in 5 small businesses still don’t utilize the power of digital marketing. However, it’s vital to understand just how powerful digital marketing can be for a business. With the right strategy, you can:

Of course, no two businesses are the same. That's why at Marketing-Jive, we believe a unique marketing strategy is essential for any business looking to succeed online. Our team of experienced professionals will take the time to get to know your business, understand your industry, and create a custom-tailored strategy that fits your values and mission.

For instance, if you're a restaurant, we can help create an SEO campaign targeted toward your type of cuisine and help you take advantage of local SEO. If you're an ecommerce store, our team can help create an effective content marketing strategy to boost organic traffic and build customer relationships. No matter what industry you're in, the possibilities are endless when it comes to digital marketing.

It's not enough to simply understand the basics of digital marketing; you need to be able to implement it effectively as well. At Marketing-Jive, that's exactly what we do. We provide some of the most comprehensive and effective digital marketing services available in the industry, such as:

Google processes an estimated 40,000 search queries every second, making SEO one of the most powerful tools in digital marketing. We can help you optimize your website to increase visibility and rank higher on search engines. When customers search for the products and services you offer, you want your business at the top of the list, and our years of SEO experience can help put it there.

If your business focuses on ecommerce, Google Ads can be a great way to increase profits. However, those profits depend on keeping costs down and managing campaigns well, and that is where we come in. Our team of experienced professionals will help you maximize your ad spend and drive more qualified leads to your website.

PPC is one of the most cost-effective forms of digital marketing. We can help you create and manage PPC campaigns that will target potential customers, allowing you to focus on the leads that matter most. We understand how to use targeted keywords, manage PPC budgets, and ensure your ads are seen by the right people at the right time.

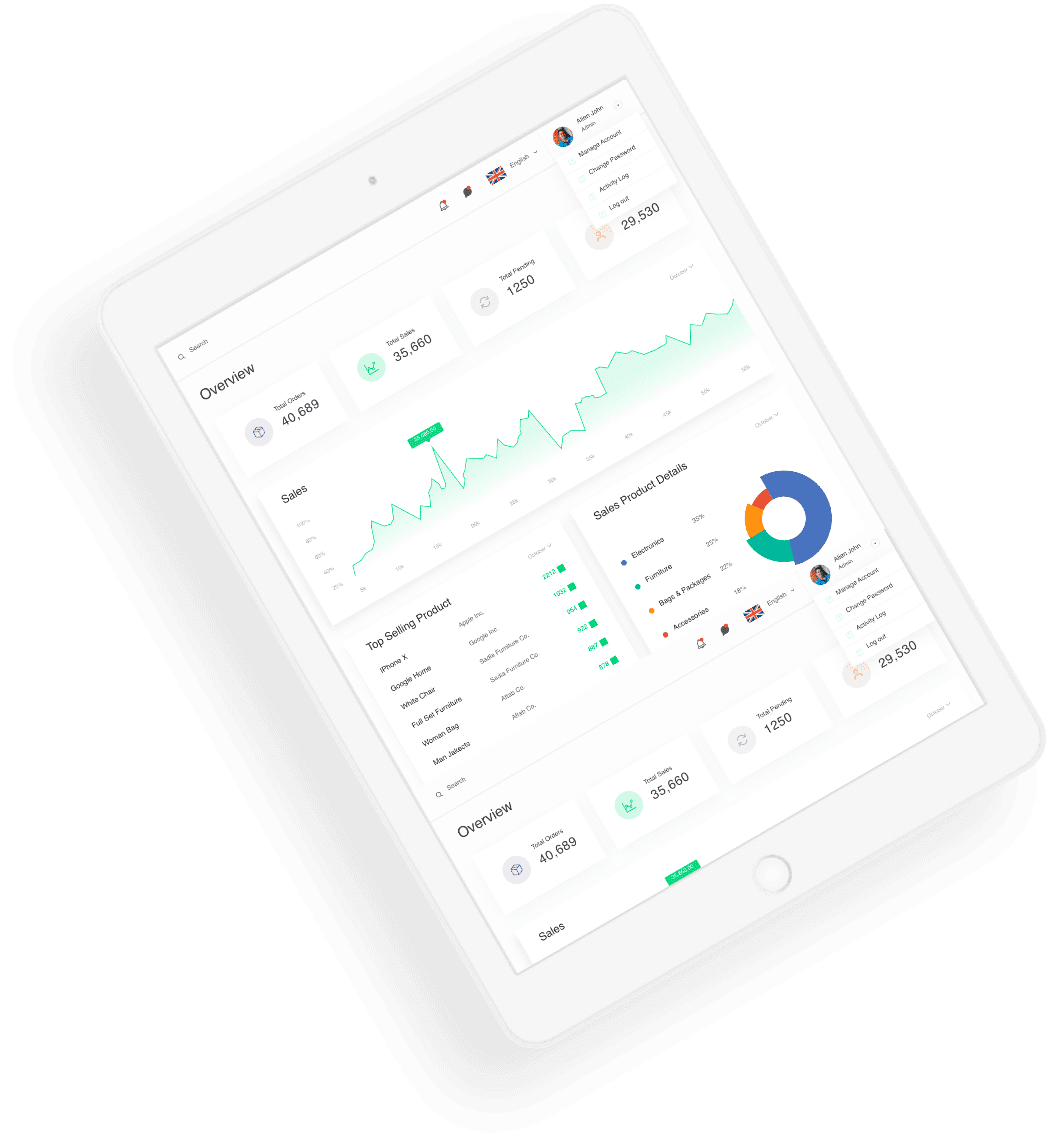

Nothing is better than a beautiful web design to help draw potential customers in. Our team of WordPress developers can help create a website that not only looks great, but functions perfectly too! We understand the power of web design when it comes to digital marketing and attracting customers, which is why we always strive to create amazing, clean, functional websites for our clients.

Are you a local brand looking to bring people to your physical store? Local SEO can be a great way to increase visibility online and make sure potential customers can find you! Our team of SEO experts knows just how to optimize your website for local searches, giving you the edge over competitors.

In addition to our beautiful web design and our comprehensive SEO services, Marketing-Jive provides a range of other digital marketing services that our customers love. You can take advantage of our services in content marketing, email marketing, social media marketing and much more! No matter what kind of business you run or what industry you specialize in, our other services can also help your business thrive, including:

Are your online search results not up to par? Our team of reputation management experts can help get your business back on track and increase customer trust. We understand how essential it is to have a good reputation and will make sure you get it back as quickly as possible. Your online reputation influences everything, from PPC to SEO, so take advantage of our services to keep it positive.

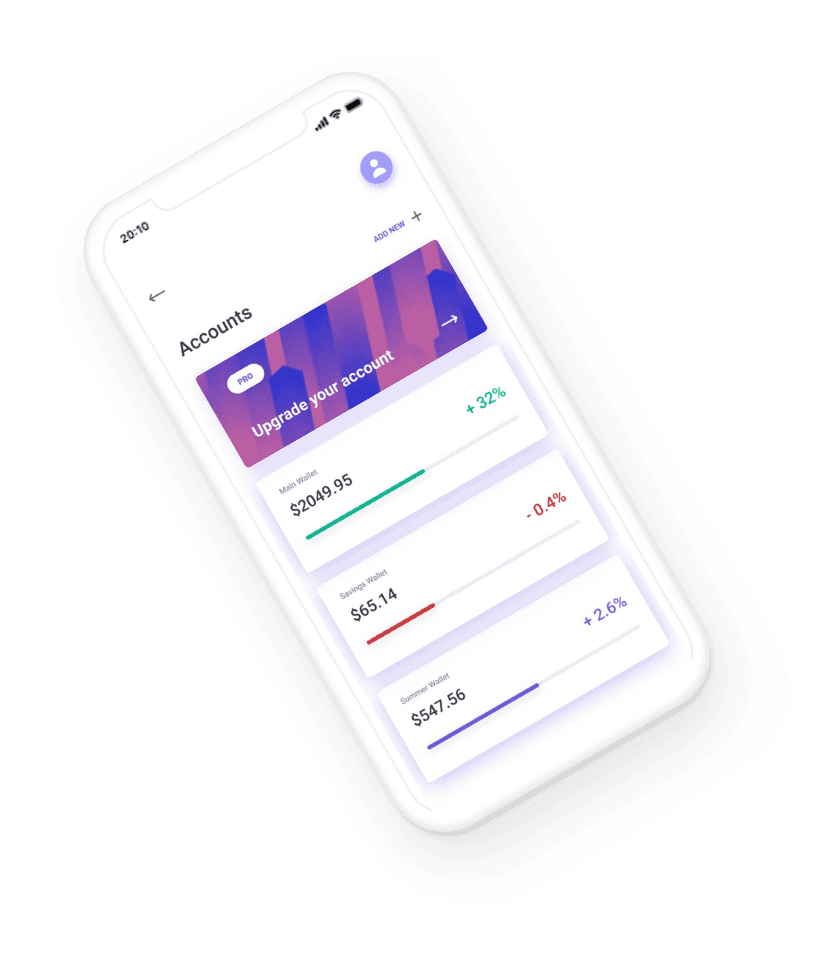

The world is mobile, and you want your digital marketing strategy to be as well. Our team of experienced mobile marketing professionals can help you create campaigns and optimize your website for mobile devices, making sure potential customers can find you no matter what device they use.

Social media is now an essential tool for businesses to reach potential customers. Our team of social media marketing experts will help you create campaigns and content that appeal to your target audience on various social media platforms. From tweets, to Instagram posts, to Facebook ads, we have you covered.

Written content is just as important as visuals when it comes to digital marketing and increasing brand visibility. Our team can help you create content that is engaging and optimized for SEO purposes. We will help you create content calendars, brainstorm topics, and more! Become a leader in your industry and stand out from the competition with content that speaks to your customers, is optimized for search engines, and drives results.

Nothing beats working with professionals who can make your business thrive. Our team of experienced professionals is dedicated to helping you reach your goals and make the most out of your digital marketing efforts. We provide the best services around and work closely with our clients to create effective campaigns that drive results. While other digital marketing agencies might be content to offer basic services, we go above and beyond to ensure you get the best service possible. Our customers love our comprehensive services and commitment to excellence, as well as our:

Ready to take your business’s digital marketing efforts to the next level? Contact our team today and let us know what you need. We can provide customized solutions that meet your specific needs and help you achieve online growth and success faster than ever before!

Combining data-driven insights, detailed analytics, and over 10 years of experience, we are the team you can trust for your digital marketing needs. Contact us now and let’s get started growing together!
